March Madness Selection Process: RIP to the RPI? Not so fast.

By Sascha Paruk in College Basketball

Updated: January 17, 2018 at 9:39 am EST

This is part one of a two-part series in which I’ll be exploring the current system used by the NCAA Tournament Selection Committee to (a) pick the at-large teams for the bracket and (b) seed the 68-team field. In part one, I look at the main flaw in the current at-large selection process and make some predictions both for this year’s bracket and future changes to the system.

“Hope is itself a species of happiness, and, perhaps, the chief happiness which this world affords.” – Samuel Johnson

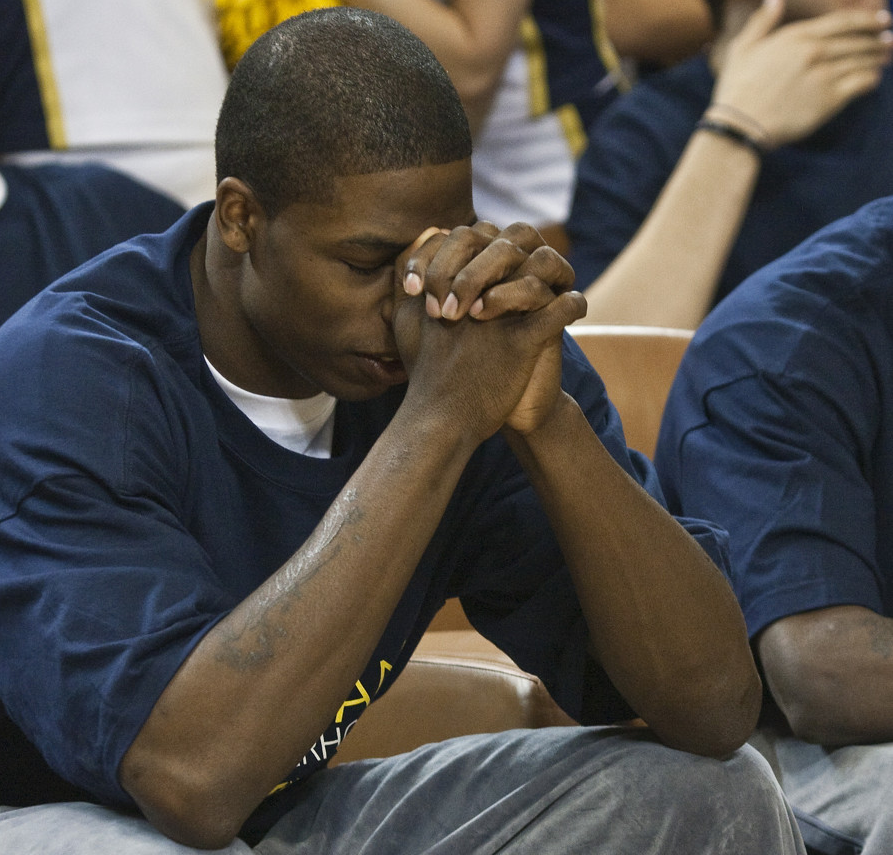

It happens every year on Selection Sunday. I sit in front of my TV and watch pure elation as bubble teams hear their names called by Greg Gumbel. It’s one of the best moments of the year in all of sports. These young men have dreamed about playing in March Madness since they were kids. Now they’ll get their chance to be in the spotlight. To a man, I can pretty much guarantee what’s going through their heads when they learn that they’re in the dance. They picture themselves pulling a Kris Jenkins and hitting the epic buzzer-beater that caps off CBS’ One Shining Moment montage.

For the vast majority, that will never happen. But hope is still alive, and the joy that brings is irrepressible.

For every action there is an equal and opposite reaction. For every bubble team that gets in, there’s another that gets relegated to the NIT, and the joy of the lucky is counteracted by the manifest disappointment of the rejected. The pain etched onto the faces of Monmouth and St. Mary’s players last year was enough to make you question the value of sports in toto.

There’s no avoiding this. Any time you have an apple as tempting as March Madness, disappointment is going to be a fact of life for all but one team. If your dreams aren’t crushed on Selection Sunday, they will be quashed in the Sweet 16, the Elite 8, the Final Four, etc. But it sticks in my craw that some teams are subjected to such disappointment when they shouldn’t be, at least not yet.

Every year, the Selection Committee gets a few things just plain wrong when it’s picking at-large teams, and it has a lot to do with the factors they look at when allocating those 36 bids. (The committee seems to know it, too. Why else would they have brought in advanced stats gurus like Ken Pomeroy and Jeff Sagarin to help change the system?)

What factors does the Selection Committee consider?

Currently, the Selection Committee considers the following factors when picking at-large teams:

- RPI;

- An extensive season-long evaluation of teams through watching games, conference monitoring calls and regional advisory committee rankings;

- Complete box scores and results;

- Head-to-head results and results versus common opponents;

- Imbalanced conference schedules and results;

- Overall and non-conference strength of schedule;

- The quality of wins and losses;

- Road record;

- Player and coach availability; and

- Various computer metrics.

The main factor that I (and others) take issue with is RPI (“Ratings Percentage Index”). It was ahead of its time when it was created in the early 80s, but it is now an outdated metric that has been surpassed by other formulae in terms of predictive capacity.

What is the RPI, exactly, and what’s wrong with it?

The RPI ranks every Division I team in the nation – all 351 of them – according to the following formula:

(Winning Percentage * 0.25) + (Opponents’ Winning Percentage * 0.50) + (Opponents’ Opponents’ Winning Percentage * 0.25) = RPI score
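To make the weighting concrete, here is a minimal Python sketch of the formula exactly as written above. The function name `rpi` and the sample inputs are my own illustration, not anything the NCAA publishes, and the sketch assumes the three winning percentages have already been computed as fractions between 0 and 1.

```python
def rpi(wp, owp, oowp):
    """Basic RPI score from three winning percentages (each between 0 and 1).

    wp   -- the team's own winning percentage
    owp  -- its opponents' winning percentage
    oowp -- its opponents' opponents' winning percentage
    """
    for name, value in (("wp", wp), ("owp", owp), ("oowp", oowp)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    # Weights from the formula above: 25% / 50% / 25%.
    return 0.25 * wp + 0.50 * owp + 0.25 * oowp


# Hypothetical team: wins 80% of its games against a perfectly average schedule.
score = rpi(wp=0.80, owp=0.50, oowp=0.50)
print(round(score, 4))  # 0.575
```

Note that only a quarter of the score comes from the team's own results; the other three quarters describe who it played.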

What’s wrong with ranking teams based thereon, and why are other, more recently developed metrics better?

For starters, the RPI doesn’t account for margin of victory. It treats every win as equal, whether it came by one point or 15. Margin of victory is widely acknowledged to be a solid indicator of a team’s true strength and future success in many sports, not just basketball. As Mark Glickman and Hal Stern noted in their 2016 paper Estimating Team Strength in the NFL, “games won by large margins generally indicate better team strength than games won by, say, an overtime scoring event.”

Second, the RPI overvalues strength of schedule and undervalues actually winning. As long as your schedule is loaded with strong opponents, you can have a great RPI while still losing a lot of games. (And, since margin of victory doesn’t come into play, you can be blown out in those games, too.) Just look at the current RPI, which has Xavier and its double-digit losses well ahead of Wichita State (26-4). Schedule those teams against each other on a neutral floor and Wichita State is going to be favored in Vegas (and might be even if the Musketeers were healthy). But Xavier has played the 11th-toughest schedule in the country, and the RPI minimizes the impact of its many (many) losses as a result. (Circling back to margin of victory, Wichita State has dominated lesser opponents lately, but the RPI doesn’t reward them for absolutely pummeling their Missouri Valley Conference competition.)
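A quick back-of-the-envelope sketch shows how this plays out under the 25/50/25 weighting. Every number below is invented for illustration: the records and schedule strengths are hypothetical, not the actual inputs for Xavier, Wichita State, or any real team.

```python
def rpi(wp, owp, oowp):
    """Basic RPI: 25% own record, 50% opponents, 25% opponents' opponents."""
    return 0.25 * wp + 0.50 * owp + 0.25 * oowp


# Hypothetical Team A: mediocre record, brutal schedule.
team_a_wp = 18 / (18 + 11)          # 18-11 record (made up)
team_a = rpi(team_a_wp, owp=0.60, oowp=0.55)

# Hypothetical Team B: excellent record, softer schedule.
team_b_wp = 26 / (26 + 4)           # 26-4 record (made up)
team_b = rpi(team_b_wp, owp=0.47, oowp=0.50)

print(f"Team A (18-11): {team_a:.4f}")
print(f"Team B (26-4):  {team_b:.4f}")
print("Team A ranks higher:", team_a > team_b)
```

With these made-up inputs, the 11-loss team comes out ahead of the four-loss team, because the seven extra losses only touch the quarter of the formula that measures winning.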

Third, the RPI doesn’t differentiate between home wins, neutral-site wins, and road wins. Home-court advantage is massively real in college basketball. Case in point: Scott Drew, the coach at Baylor, has lost just as many games in Allen Fieldhouse (the home stadium of the Kansas Jayhawks) as Kansas coach Bill Self. Kansas’ home loss to Iowa State earlier this year was just Self’s tenth loss in nearly 220 games at Allen Fieldhouse. Drew – who’s had his share of quality teams – is 0 for 10 in Lawrence.

Fourth, the RPI is not only a stand-alone factor in the selection process; it leaks into the other factors enumerated above. For instance, “quality of wins and losses” is largely gauged by your record against top-50 RPI teams. This faulty metric is actually doing double-duty.

What should the committee consider instead?

There’s no one perfect statistical metric for comparing teams. But when it comes to stratifying squads based on results to date, we have better options than the RPI, and one or more of them should take the RPI’s place in the committee’s deliberations. The best option, in my view, is the KenPom ratings.

Developed by advanced stats guru Ken Pomeroy, the KenPom ratings are a forward-looking ranking of all the teams in the nation. As the man himself puts it,

The first thing you should know about this system is that it is designed to be purely predictive.

…

The purpose … is to show how strong a team would be if it played tonight, independent of injuries or emotional factors. Since nobody can see every team play all (or even most) of their games, this system is designed to give you a snapshot of a team’s current level of play.

The fact that the ranking is predictive doesn’t mean it can’t be used to assess past performance and help find the 36 most deserving at-large candidates. The stats that go into the equation for the ranking are the stats from the current season. KenPom predicts (pretty accurately) future success based on past performance. In effect, it tells you which teams are the best based on what they’ve accomplished to date. That’s basically the exact same thing the RPI aims to do. But KenPom does it better.

Back in 2013, some smart people at Harvard pitted the two stats against each other using past March Madness results. KenPom was shown to be a much better predictor of who would win.

KenPom has its own flaws. It probably values strength of schedule a bit too much, minimizing the value of winning – which is the whole point of basketball. But it does account for margin of victory, which any self-respecting metric should. It also factors in home-court advantage.

Even if you ignore the predictive performance of the two metrics, the KenPom rankings just look more accurate, superficially. As the committee might say, they pass “the eye test.” For example, Gonzaga (29-1 overall) is the top-ranked team in KenPom. They’re 11th in the RPI, five places below Florida (23-6 overall), whom they have already beaten. KenPom has the Zags five places higher than the Gators.

Meanwhile, as mentioned, 11-loss Xavier (26th) is well ahead of four-loss Wichita State (40th) in the RPI. The situation is completely flipped in KenPom, where Wichita State sits 10th and Xavier 39th. When the bracket comes out, don’t be surprised if Xavier gets the higher seed. A loss for Wichita State in the Missouri Valley Conference tournament could see them left out of the bracket altogether. Such is the effect of the RPI as things currently stand.

Will it always be this way? Here are my predictions for the 2017 bracket and what lies ahead for the selection process.

Bracket and Selection Process Predictions

Gonzaga doesn’t get a no. 1 seed.

Back when the Selection Committee released its first-ever mid-season rankings, it was clear that they were still relying heavily on RPI. Gonzaga, which was undefeated at the time and owned wins over Arizona, Florida, and Iowa State to name a few, was only ranked fourth by the committee, behind RPI darlings Baylor, Kansas, and Villanova, all of whom had at least two losses at the time. Now with one (admittedly pretty bad) loss on their resume (79-71 to BYU at home), the Zags will find themselves bumped from the one-line in favor of Kansas, Villanova, a Pac-12 power like UCLA or Oregon, and an ACC team (likely North Carolina).

Xavier receives a higher March Madness seed than Wichita State.

It’s shocking that the Shockers are a bubble team. CBS’ Jerry Palm has them on the outside as of Feb. 28. I think they’ll still get in even if they don’t win the autobid from the MVC. But they’ll be one of the last at-larges, and might end up a no. 12 seed for the second year in a row and have to navigate the First Four. Xavier, on the other hand, will be a no. 10 seed or better.

The RPI is removed from the selection process by 2020.

When my predictions above come true, I fully expect continued backlash against the current process, in particular the RPI. While the NCAA doubled down on the controversial metric back in 2013, this year it showed signs that it’s willing to listen. As I briefly touched on already, the committee met with Ken Pomeroy, Jeff Sagarin, and other metrics mavens this year in an effort to refine its approach in the years ahead.

The long-term impact of that meeting won’t be known for a while, but you have to think that committee members left with this important piece of knowledge: the RPI’s place in the advanced-stats world is growing smaller and smaller; as other metrics continue to be tweaked and refined, the stagnant RPI will only become more and more dated, and more and more useless.

The earliest we’re going to see any official changes to the selection criteria is 2018, and I don’t think the RPI will officially bite the dust that early. It’s too ingrained into the system. But its merciful ebb from our lives has begun, and it should be gone for good by 2020.

KenPom will become a stated factor in 2018.

The only way to quiet critics – and improve the system – is to change something. I already said that I think the RPI will stick around until 2020, but I also think we’ll see some alterations as soon as next season. If you listen to as many college basketball podcasts as I do, you know the importance the nation’s pundits place on the KenPom rankings. KenPom has become the de facto ruling party in the metrics parliament. The committee is going to appease calls for change by expressly including it as a factor in the selection process next year.

Do you agree that the RPI should be put to bed? Give us your two cents – or full dime – in the comments. And stay tuned for part two of my series (March 22) when I’ll be pontificating on how the Selection Committee should seed schools once the 68-team field is set.

Managing Editor

Sascha has been working in the sports-betting industry since 2014, and quickly paired his strong writing skills with a burgeoning knowledge of probability and statistics. He holds an undergraduate degree in linguistics and a Juris Doctor from the University of British Columbia.